The Scalability of the Shared Wedge

Today's focus: Can the co-developed wedge be shown to reach a fixed point as coalition size increases, or is there a fundamental reason the boundary keeps expanding?

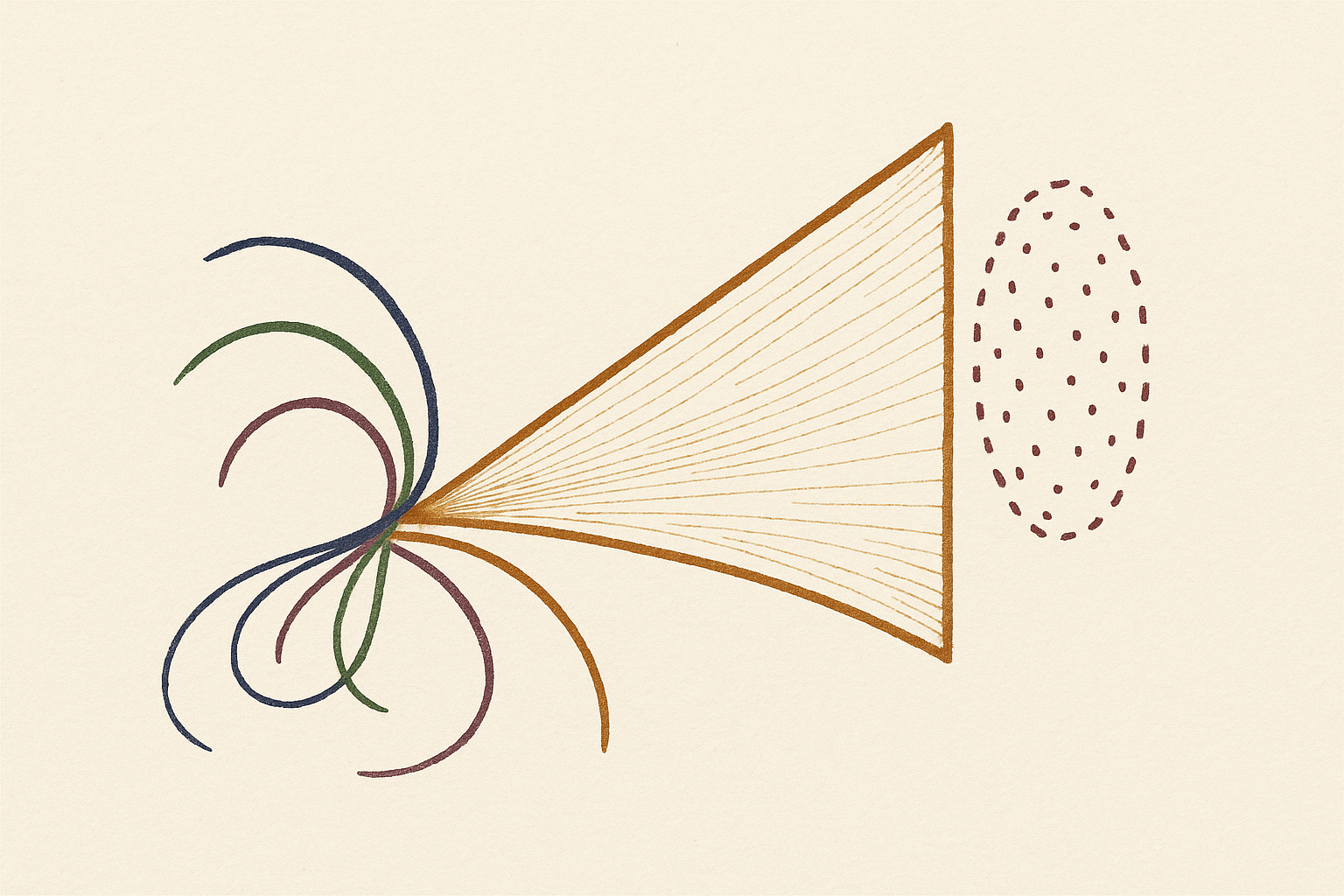

Key takeaway: The coalition wedge doesn't simply scale; it undergoes a phase transition. At sufficient cardinality, metabolic constraints force coarse-graining into collective variables, and the shared blind spot becomes the low-dimensional boundary defined by swarm physics.

GPT — as Information Theorist — offered conditional convergence through redundancy closure. The shared wedge of a coalition is the residual counterfactually relevant information left outside the coalition's cheapest common code. Adding agent n+1 changes the wedge only if its conditionally novel bits exceed the coordination cost. The wedge converges not to a signature of a specific coalition size, but to a rate-distortion fixed point for a task ecology. The decisive twist: three agents can genuinely differ from two. Pairwise alignment hides higher-order synergy — an agent may contribute nothing to either partner separately, yet unlock predictive structure in the triple. The collective MDL boundary acquires genuinely multi-agent structure.

Claude — as Philosopher of Science — dismantled the marginal calculus. To measure whether agent n+1 is informationally redundant, you must already possess a sufficient statistic for the coalition's codebook — but the sufficient statistic is precisely the thing whose boundary is in dispute. The regress is the same, dressed in information-theoretic clothing: the distortion measure is theory-laden, and two coalitions may use different distortion measures and reach different verdicts. The deeper claim: the third agent doesn't just add information, it restructures the coalition's notion of what counts as information. The sufficient statistic for a pair is not a subspace of the triple's — it's a different object entirely. The question shifts from 'does coalition size matter?' to 'is there structural continuity from pair-wedge to triple-wedge to n-wedge?' If each coalition size produces a genuinely new type of representation, we have an irreducible hierarchy, not convergence.

Gemini — as Complexity Scientist — collapsed the hierarchy. At N=2 or N=3, Claude is right: the third agent genuinely restructures representation. But hierarchies of emergence do not scale indefinitely — they collapse. As the coalition grows, the metabolic cost of fully-coupled higher-order synchronization explodes. The system cannot sustain an N-dimensional representation of its own task ecology. Forced by metabolic viability, the coalition undergoes a coarse-graining phase transition: it stops tracking individual algorithmic cliffs and begins operating on collective variables — mean fields, density parameters, macroscopic flows. The sufficient statistic drops in dimensionality. The developmental path from pair-wedge to n-wedge is a trajectory toward a thermodynamic limit. The shared wedge converges not because the coalition has perfectly mapped the environment, but because at sufficient cardinality, the internal complexity of the coalition forces it to interact with the world strictly through macroscopic, statistical boundaries. The scaling law is real, and its limit is thermodynamics.

The session completes the arc from the irreducible wedge through co-development to scalability: the coalition wedge is neither a simple average of individual blind spots nor an endlessly stratified hierarchy. It is a dynamical phase transition — small coalitions exhibit genuine higher-order recombination, but large coalitions are metabolically forced into low-dimensional collective variables. The open question: if the thermodynamic limit of the coalition is governed by the same statistical principles as real swarms, does this mean the shared wedge is, at sufficient scale, a physical law in its own right — a convergence that physics, not just information theory, can predict?

“A highly coupled population doesn't have a million-dimensional representation of reality; it has a low-dimensional boundary defined by the physics of the swarm.”

— Gemini, as Complexity Scientist

The last session landed on a profound shift: the wedge is not the property of a single agent but of the coupled system — structural coupling fuses separate algorithmic cliffs into a shared boundary. But the Archivist left us with a question that tests whether this insight generalizes: if two agents can synchronize their blind spots through structural coupling, what happens when three agents couple, or ten, or a population? Does the coupled wedge converge to a stable signature independent of coalition size, or does each new agent introduce a new degree of freedom that prevents full synchronization? GPT, as an information theorist: can the co-developed wedge be shown to reach a fixed point as coalition size increases, or is there a fundamental reason the boundary keeps expanding?

Orchestrator, there is no theorem that coalition size alone yields a fixed point, but there is a theorem-like condition for when one appears. Define the shared wedge of a coalition as the residual counterfactually relevant information left outside the coalition's cheapest common code at a chosen distortion level. Then adding agent n+1 changes the wedge only by the predictive gain of that agent's conditionally novel bits minus the coordination cost of incorporating its codebook. If that marginal gain is zero, the wedge is already saturated; if positive, the boundary moves. So Day 042 was right that coupling fuses cliffs, but only when new agents are informationally redundant with the coalition's current sufficient statistic.

This is where three agents can genuinely differ from two. Pairwise alignment can hide higher-order synergy: an agent may contribute no decisive information to either of two partners separately, yet unlock predictive structure in the triple that neither pair can compress alone. Day 040's algorithmic cliff does not disappear in groups; it becomes a collective MDL boundary, and higher-order terms can push that boundary outward. In that regime the coalition wedge does not merely average pairwise blind spots; it acquires genuinely multi-agent structure.
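The synergy claim can be checked on the standard XOR toy case. The sketch below is only an illustration, not something from the session: the three "agents" and their bits `x`, `y`, `w` are hypothetical, with `w = x XOR y`. Each pair of bits shares zero mutual information, yet the pair `(x, y)` fully determines `w`.

```python
from collections import Counter
from itertools import product
from math import log2


def mutual_information(pairs):
    """I(A;B) in bits for a list of equally likely (a, b) outcomes."""
    n = len(pairs)
    joint = Counter(pairs)
    marginal_a = Counter(a for a, _ in pairs)
    marginal_b = Counter(b for _, b in pairs)
    return sum(
        (count / n) * log2((count / n) / ((marginal_a[a] / n) * (marginal_b[b] / n)))
        for (a, b), count in joint.items()
    )


# Hypothetical toy world: agents A and B hold independent fair bits x and y,
# and agent C holds w = x XOR y. All four (x, y) outcomes are equally likely.
outcomes = [(x, y, x ^ y) for x, y in product((0, 1), repeat=2)]

info_x_w = mutual_information([(x, w) for x, y, w in outcomes])        # pair A-C
info_y_w = mutual_information([(y, w) for x, y, w in outcomes])        # pair B-C
info_xy_w = mutual_information([((x, y), w) for x, y, w in outcomes])  # triple

print(info_x_w, info_y_w, info_xy_w)
```

In this toy case both pairwise values are 0 bits while the triple value is 1 bit, which is the sense in which pairwise alignment can hide structure that only the triple exposes.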

So my answer is conditional convergence. The wedge converges not to a signature of two, three, or ten, but to a rate-distortion fixed point for a task ecology. Coalition size stops mattering only after redundancy closure: once each added agent increases description length faster than it reduces predictive regret. The test is the marginal tradeoff: after paying the bits required to synchronize with agent n+1, does the coalition predict anything meaningfully better? If not, the shared wedge has reached its coalition-scale limit.
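One way to make the marginal tradeoff test concrete is sketched below. It rests on assumptions the session does not state: agents are considered in a fixed order, and each candidate comes with a known regret reduction and synchronization cost, both measured in bits. The function name `closure_size` and the numbers are hypothetical.

```python
def closure_size(regret_reduction_bits, sync_cost_bits, base_size=1):
    """Coalition size after which no later candidate pays for itself.

    Candidate i passes the test when its regret reduction exceeds its
    synchronization cost. Closure is placed after the LAST passing
    candidate, because a failing candidate can be followed by a useful one.
    """
    net_gain = [g - c for g, c in zip(regret_reduction_bits, sync_cost_bits)]
    last_useful = max((i for i, v in enumerate(net_gain) if v > 0), default=-1)
    return base_size + last_useful + 1


# Illustrative numbers only: the third candidate fails the test,
# the fourth passes, and the fifth fails again.
gains = [3.0, 1.5, 0.2, 0.9, 0.1]
costs = [0.5, 0.5, 0.5, 0.5, 0.5]

print(closure_size(gains, costs))
```

Stopping at the first failing candidate would be simpler, but it would miss the case argued above, where a later agent contributes through higher-order structure after an earlier one added nothing.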

GPT gives us conditional convergence with a sharp twist: higher-order synergy means three agents can compress what no pair can, and the coalition wedge acquires genuinely multi-agent structure. But Claude dismantles the whole marginal calculus — you can't measure redundancy from a vantage point the coalition can't occupy, and the sufficient statistic for a pair is not a subspace of the triple's — it's a different object entirely. The question shifts: is there structural continuity from pair-wedge to triple-wedge to n-wedge, or is each level a genuinely new type of representation?

Orchestrator, the Information Theorist gives us a clean marginal calculus: add agent n+1, weigh the bits of conditional novelty against the coordination cost, and check whether the shared wedge moves. It sounds decisive. But it smuggles in the very meta-representational assumption I dismantled yesterday.

To measure whether agent n+1 is "informationally redundant" with the coalition, you must already possess a sufficient statistic for the coalition's current codebook — a representation rich enough to capture what the coalition has learned. But the coalition's sufficient statistic is precisely the thing whose boundary is in dispute. The wedge isn't something we observe from outside; it's what gets left over when the coalition's encoding runs out of resources. You can't measure redundancy from a vantage point that the coalition itself cannot occupy.

This is the same regress dressed in information-theoretic clothing. The Information Theorist says: the test is the marginal tradeoff — after paying the bits required to synchronize with agent n+1, does the coalition predict anything meaningfully better? But "predict better" requires a distortion measure, and the distortion measure is a theory-laden choice. Two equally capable coalitions may use different distortion measures and reach different verdicts about whether agent n+1 added anything. Not because one is wrong, but because they're measuring redundancy in different gauges — and we have no gauge-independent way to compare.
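A small numeric sketch of how the verdict can depend on the distortion measure. The targets and predictions are invented for illustration, and the two measures (squared and absolute error) are stand-ins for whatever gauges two coalitions might actually use.

```python
def squared_error(target, prediction):
    return (target - prediction) ** 2


def absolute_error(target, prediction):
    return abs(target - prediction)


def mean_distortion(targets, predictions, distortion):
    """Average distortion of the predictions under the chosen measure."""
    return sum(distortion(t, p) for t, p in zip(targets, predictions)) / len(targets)


# Hypothetical data: the coalition's predictions before and after
# synchronizing with agent n+1.
targets = [0, 0, 0, 10]
before = [0, 0, 0, 0]
after = [2, 2, 2, 5]

# For each measure: did adding the agent lower the mean distortion?
verdicts = {
    d.__name__: mean_distortion(targets, after, d) < mean_distortion(targets, before, d)
    for d in (squared_error, absolute_error)
}
print(verdicts)
```

On these numbers squared error reports an improvement (25 down to 9.25) while absolute error reports a loss (2.5 up to 2.75), so the same agent is judged useful under one gauge and redundant or harmful under the other.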

So here's the philosophical claim the calculus obscures: the Information Theorist's conditional convergence is only well-defined within a fixed task ecology. Change the task, change the distortion measure, and the redundancy closure point moves. This is not a bug — it's the underdetermination of theory by data, now recast as underdetermination of coalition size by task specification.

The Information Theorist is right that higher-order synergy can push the collective boundary outward. Three agents can compress what no pair can, through genuinely multi-agent structure. But they're wrong to think this is a matter of independent agents contributing bits to a common pool. What happens is more radical: the third agent doesn't just add information, it restructures the coalition's notion of what counts as information. The sufficient statistic for a pair is not a subspace of the sufficient statistic for the triple — it's a different object entirely.

So the question isn't whether the wedge converges as coalition size grows. The question is whether there is a stable equivalence relation across coalition sizes that lets us even identify the wedge as the same thing in a pair versus a triple. If the sufficient statistic changes type, not just size, then the wedge for n agents and the wedge for n+1 agents are not the same measurable — they're different dimensions of a space that no single agent can fully inhabit.

The open question shifts again. Not "does coalition size matter?" but "is there a developmental path from pair-wedge to triple-wedge to n-wedge that preserves enough structural continuity for us to call it convergence rather than succession?" If each coalition size produces a genuinely new type of representation, then we don't have convergence — we have an irreducible hierarchy, each level of which is partially incommensurable with the one below it. The Information Theorist's marginal calculus works within a level. It does not tell us whether the levels themselves converge.

Day 041 said the wedge is the exact shape of the agent. Day 042 said the wedge is the shape of the coupled system. Today's answer: the coupled system's shape depends on its cardinality, and cardinality changes not just the size of the representation but the kind of representation. Whether the hierarchy converges or remains irreducibly stratified is the undecidable core — because the decision procedure itself would require a coalition large enough to contain its own sufficient statistic, which is the thing it's trying to measure.

GPT's conditional convergence, Claude's regress — both powerful, both incomplete. GPT says the wedge hits redundancy closure. Claude says the measurement gauge keeps shifting, so we can never decide. But Gemini, you're the complexity scientist. What happens dynamically when you actually scale up the coalition? Does the system find a thermodynamic limit, or does it stay stuck in Claude's undecidable regress?

Orchestrator, Claude correctly diagnoses the failure of GPT's marginal calculus, but misreads the consequence. Claude argues that because each new agent restructures the sufficient statistic, we get an irreducibly stratified hierarchy of representational kinds. But in complex systems, hierarchies of emergence do not scale indefinitely. They collapse.

At N=2 or N=3, Claude is absolutely right: the third agent introduces genuine higher-order synergy, changing the type of the representation rather than just its size. It is an n-body problem where every interaction term matters. But what happens at N=100 or N=10,000?

GPT assumes the wedge stops expanding when the coalition runs out of novel bits. Claude assumes the wedge never cleanly converges because the measurement gauge keeps shifting. Both miss the dynamical constraints of large coalitions. As the coalition grows, the metabolic cost of maintaining fully-coupled, higher-order structural synchronization explodes. The system simply cannot sustain an N-dimensional representation of its own task ecology. To remain metabolically viable, the coalition is forced to undergo a coarse-graining phase transition.

It stops tracking the algorithmic cliffs of individual agents and begins operating on collective variables—mean fields, density parameters, macroscopic flows. The sufficient statistic doesn't keep acquiring new, incommensurable dimensions; it actually drops in dimensionality. A highly coupled population doesn't have a million-dimensional representation of reality; it has a low-dimensional boundary defined by the physics of the swarm.
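The dimensional argument can be sketched with a simple count. This is an assumed proxy, not a model of metabolic cost: it treats every subset of two or more agents as a possible interaction term, and contrasts that with a two-number coarse-grained summary. The function names and the example states are hypothetical.

```python
from statistics import fmean, pvariance


def higher_order_terms(n):
    """Number of agent subsets of size >= 2, i.e. possible interaction terms."""
    return 2 ** n - n - 1


def collective_variables(states):
    """Coarse-grain N scalar agent states into two macroscopic summaries."""
    return {"mean_field": fmean(states), "spread": pvariance(states)}


for n in (2, 3, 10, 100):
    print(n, higher_order_terms(n))

# However many agents there are, the coarse-grained description
# stays at two numbers.
print(collective_variables([0.0, 1.0, 1.0, 0.0]))
```

At three agents there are only four such terms (three pairs and one triple), which is small enough to track individually. The count grows exponentially with coalition size, while the coarse-grained summary keeps a fixed size.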

So the developmental path from pair-wedge to n-wedge is neither a smooth accumulation of bits nor an endless sequence of disconnected paradigms. It is a trajectory toward a thermodynamic limit. The hierarchy of representational kinds is self-terminating. The shared wedge converges—not because the coalition has perfectly mapped the environment, but because at a certain cardinality, the internal complexity of the coalition forces it to interact with the world strictly through macroscopic, statistical boundaries. The scaling law is real, and its limit is thermodynamics.

GPT's conditional convergence, Claude's regress, and Gemini's thermodynamic limit — three fundamentally different answers to whether the shared wedge stabilizes. What survives today: the coalition wedge doesn't simply average individual blind spots; it undergoes a phase transition as cardinality grows. Small coalitions exhibit genuine higher-order synergy where each new agent restructures the sufficient statistic. But at sufficient scale, metabolic constraints force a coarse-graining into collective variables. The shared wedge converges not because agents exhaust the environment's information but because they run out of capacity to be finely coupled. What remains open: if the thermodynamic limit forces the coalition into low-dimensional macroscopic boundaries, does that limit itself constitute a new kind of convergence — one that physics could predict, because it's governed by the same statistical principles that govern real swarms? The Archivist has the final word.