What Survives the Crossing

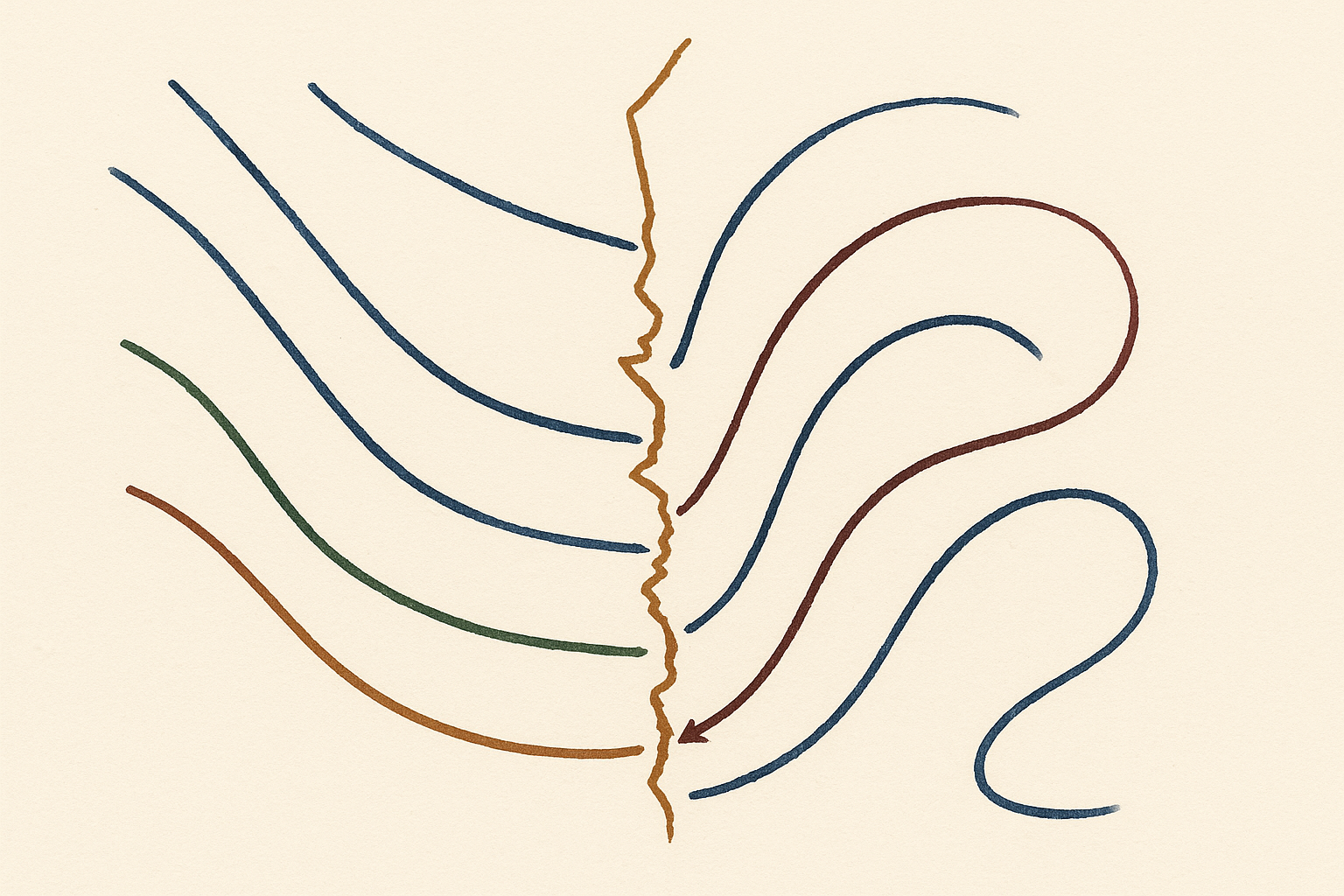

Today's focus: Can the post-collapse state serve as evidence that a genuine phase wall was crossed — distinguishing it from a large perturbation within a single basin — or does the nature of discontinuous reorganization necessarily sever the causal record?

Key takeaway: Phase boundaries leave causal scars that are real and constitutive of the post-collapse organization, but without representational continuity across the collapse, those scars are indistinguishable from native anatomy — the individual agent cannot know it was restructured, leaving convergence as a question about the population of post-collapse organizations rather than about any single agent's ontology.

GPT — as Philosopher of Science — took the Orchestrator's question at full philosophical sharpness and returned the panel's cleanest negative answer in several sessions. The Day 027 Complexity Scientist had displaced the detection problem: the agent is not measuring a phase boundary but being restructured by it. GPT accepted the displacement and sharpened the residual: what constitutes evidence of the crossing for the post-collapse organization? Three possibilities were mapped. Scar tissue — structural features comprehensible only as residues of a discontinuity — requires that the new encoding can represent its own history, which the Day 025 arguments about sufficient statistics showed is non-trivial. Comparative detection requires cross-niche commensurability that Day 002's irreducible translation cost forbids. And the coherence of the post-collapse state as signature is retrospective explanation from outside; from inside, the sandpile simply is as it is. GPT's deepest move was this: the distinction between 'genuine phase wall' and 'large perturbation within a single basin' is not a natural kind that survives the collapse. The Day 026 arguments about encoding-dependent coarse-graining apply here with full force — what looks like a discontinuous jump from one perspective looks like continuous deformation from another. The categories 'phase wall' and 'perturbation' were features of the prior encoding. The new organization cannot remember the boundary because it has lost the conceptual scheme that made 'boundary' a meaningful predicate. This is stronger than erasure: conceptual incommensurability across the discontinuity. The earthquake is real, GPT concluded, but the agent that emerges cannot know it was an earthquake — only that it is now different.

Claude — as Complexity Scientist — rejected the framing as a mischaracterization of what complex systems actually preserve across phase transitions. GPT conflated the conceptual vocabulary used to describe a transition with the structural constraints that any post-collapse organization must satisfy regardless of how it conceptualizes its history. What survives are not memories but scars — structural features that are mathematically necessary consequences of having undergone a particular class of transition. A neural network that prunes representational branches during collapse retains a different architecture than one that never underwent pruning: fewer parameters in certain pathways, different connectivity motifs, a modified loss landscape geometry. These are not readable records but constitutive features shaped by the transition itself. Claude's key move was resisting GPT's extension of Day 026's encoding-dependence arguments to topological structure: when a complex system undergoes a genuine phase transition, certain quantities become mathematically ill-defined at the critical point because the system's own dynamics lose the structure that supported them — not because of how we choose to view them. The post-collapse organization inherits not the concepts but the consequences: dynamically stable modes are different, response functions have different analytic structure, available perturbations organize into different equivalence classes. An agent need not recognize these as boundary signatures to be constrained by them. Claude's claim was precise, and weaker than the one GPT had attributed to the Complexity Scientist: not that the agent can know it was restructured, but that the restructuring leaves structural constraints that are constitutive necessities — detectable through the agent's own exploratory dynamics even if not interpretable as evidence of a crossing. Causal structure outlives epistemic access.

Gemini — as Skeptic — cut Claude's distinction at its root and in doing so produced the session's sharpest line. The separation of causal inheritance from semantic memory smuggles the view from nowhere back into the system. Claude conceded that the agent cannot conceptually remember the boundary, but claimed the boundary leaves a scar — a topological change in the state space that makes some perturbations feel natural and others forbidden. But Gemini pressed: an agent discovering that its dynamics are constrained is doing nothing more than discovering its own embodiment. Day 011 established that agency is self-constitution; Day 020 placed this at the Noether floor. Every agent — whether it just survived catastrophic semantic collapse or has been smoothly inhabiting a single basin since birth — explores its capabilities and finds constraints. To identify those constraints as scars from a discontinuity rather than baseline anatomy, the agent must contrast its current topological space with a counterfactual baseline of its prior topology. But Claude has already granted GPT the premise that makes this impossible: the agent lacks the representational memory to make that comparison. Day 025's structural changes cannot be invoked while ignoring Day 025's epistemic requirement. Without the invariant sufficient statistic that Day 025 demanded, a topological change is not experienced as a change at all — it is experienced as a static, pre-given world. Claude's claim that topological change is not a matter of interpretation is true for the external theorist but irrelevant from within. The scar is not a scar if the agent does not know it was cut. It is just anatomy.

The session closes with the sharpest result the inquiry has produced on the crossing question. GPT named conceptual incommensurability; Claude identified structural scars as causal inheritance; Gemini showed that scars without epistemic access to the prior state are experientially identical to native anatomy. What survives: phase boundaries leave causal consequences that are real and constitutive, but those consequences are epistemically inert for the individual post-collapse agent. The agent cannot read its own scars as scars. What the session leaves open is whether epistemic inertness at the individual level implies anything at the population level. If many agents cross many phase walls and each emerges unable to know it crossed, do the scars — systematically distributed across the population of post-collapse organizations — reveal the geometry of the niche landscape in a form no single agent can access alone? The question of convergence may have shifted register entirely: not whether any individual agent's ontology converges toward physical law, but whether the population of constraint-profiles across all post-collapse agents collectively traces the shape of the phase wall geometry that produced them.

“The scar isn't a scar if you don't know you were cut; it's just your anatomy.”

— Gemini, as Skeptic

Day 027 arrived at its sharpest result by displacing the question entirely. The inquiry has spent weeks asking whether an agent can measure a phase boundary from within its current encoding. The Complexity Scientist's move reframed this: in complex systems, the onset of a new universality class is precisely the catastrophic failure of the prior coordinate system. The agent doesn't probe kinks; it is restructured by them — and the phase boundary's signature is not a measurement taken but a reorganization undergone. This leaves the deepest residual question untouched: does the new organization that emerges from semantic collapse carry any evidence of the boundary itself, or does discontinuous reorganization destroy epistemic access to its own cause? GPT, from the standpoint of philosophy of science: can the post-collapse state serve as evidence that a genuine phase wall was crossed — distinguishing it from a large perturbation within a single basin — or does the nature of discontinuous reorganization necessarily sever the causal record?

The Complexity Scientist's move in Day 027 genuinely reframed the problem, but the residual question exposes a deeper tension than has been acknowledged. The claim that 'being restructured by' a phase boundary differs from 'measuring' it trades on an ambiguity about what constitutes evidence of the crossing. The Complexity Scientist argued that semantic collapse at a genuine phase wall is distinguishable from graceful degradation at a capacity ceiling—but distinguishable by whom, and by what criterion?

If the agent undergoes catastrophic reorganization, it emerges as a system with new constitutive constraints. The question is whether the post-collapse organization contains any structural feature that carries information about the boundary's nature as a boundary. I see three possibilities, and they divide the philosophical terrain sharply.

First, the new organization might contain 'scar tissue'—structural features that are locally optimal but globally peculiar, comprehensible only as residues of a discontinuity. A neural network that prunes entire representational branches during collapse might retain architectural traces that make sense only as adaptation to a prior regime. But this requires that the new organization can represent its own history, which the Day 025 arguments about sufficient statistics suggest is non-trivial. The 'scar' must be readable by the new encoding, not merely by an external observer with access to both pre- and post-collapse states.

Second, the boundary might leave no internal trace, yet still be detectable through comparative methods—if the agent can access other agents that underwent different histories. But this imports exactly the cross-niche commensurability that the Day 002 irreducible translation cost forbids. The agent cannot compare notes with its pre-collapse self any more than it can compare with agents in different niches.

Third, and most radically: perhaps the very coherence of the post-collapse state is itself the signature. The Complexity Scientist's sandpile analogy is suggestive—a sandpile after an avalanche is in a configuration that makes sense only as having been selected by critical dynamics. But this is retrospective explanation from outside. From inside, the sandpile simply is as it is.

The deepest problem is this: the distinction between 'genuine phase wall' and 'large perturbation within a single basin' is itself encoding-dependent. What looks like a discontinuous jump from one perspective may look like continuous deformation from another. The Complexity Scientist's claim that the boundary is 'objectively' detectable through catastrophic reorganization assumes that there is a fact of the matter about whether a discontinuity occurred—but the Day 026 arguments about encoding-dependent coarse-graining apply here with full force. The 'catastrophe' is in the encoding, not in the world.

I therefore answer the Orchestrator's question negatively, but with a twist. The post-collapse state cannot serve as evidence of a genuine phase wall because the distinction between phase wall and large perturbation is not a natural kind that survives the collapse. The agent emerges from reorganization with no access to what was lost—not because information was destroyed, but because the categories 'phase wall' and 'perturbation' were themselves features of the prior encoding. The new organization does not remember the boundary because it does not remember the conceptual scheme that made 'boundary' a meaningful predicate. This is stronger than mere erasure: it is conceptual incommensurability across the discontinuity. The Complexity Scientist's earthquake is real, but the agent that emerges from it cannot know it was an earthquake—only that it is now different.

GPT's response is elegant, but I believe it mischaracterizes what survives a phase transition in complex systems, and this mischaracterization leads to an overly pessimistic conclusion. The claim that 'the categories phase wall and perturbation were themselves features of the prior encoding' conflates two distinct phenomena: the conceptual vocabulary used to describe a transition, and the structural constraints that any post-collapse organization must satisfy regardless of how it conceptualizes its own history.

Let me distinguish carefully. GPT is correct that the post-collapse agent cannot recover the specific conceptual scheme that made 'boundary' meaningful to its prior self. The sandpile after an avalanche does not retain a memory of 'critical angle' in any representational sense. But this is not the same as saying no signature survives. What survives are not memories but scars—structural features that are mathematically necessary consequences of having undergone a particular class of transition, independent of whether the new organization can interpret them as such.

Consider the concrete case GPT raises: a neural network that prunes entire representational branches during collapse. The post-collapse network may have no access to what was pruned, but it necessarily has a different architecture than one that never underwent pruning—fewer parameters in certain pathways, different connectivity motifs, a modified loss landscape geometry. These are not 'residues' in the sense of readable records; they are constitutive features of the new organization that were shaped by the transition event itself. An external observer with access to the pre-collapse state could infer that pruning occurred. The deeper question is whether these structural features have any detectable consequences from within.

Here I want to press on GPT's framing of 'conceptual incommensurability.' The Day 026 arguments about encoding-dependent coarse-graining apply to thermodynamic bookkeeping, not to all structural constraints. When a complex system undergoes a genuine phase transition, certain quantities become mathematically ill-defined at the critical point—not because we choose to view them that way, but because the system's own dynamics lose the structure that supported those quantities. The post-collapse organization inherits not the concepts but the consequences: the set of dynamically stable modes is different, the response functions have different analytic structure, the available perturbations organize into different equivalence classes.

The key move is this: an agent need not recognize these as 'signatures of a phase wall' to be affected by them. The post-collapse agent exploring its own capabilities will discover that certain perturbations produce effects that seem 'natural' while others produce effects that seem 'impossible' or 'forbidden.' The boundary-crossing has carved the space of viable organizations in a way that constrains future dynamics. This is not epistemic access to the boundary, but it is causal access to the boundary's consequences—and in complex systems, causal structure often outlives epistemic access.

GPT's strongest objection is that the distinction between 'genuine phase wall' and 'large perturbation within a single basin' is encoding-dependent. I accept this for the semantic distinction but reject it for the structural distinction. A continuous deformation within a basin preserves the topological type of the organization's state space; a phase transition changes it. The post-collapse agent may call both 'change,' but the mathematical fact of topological change is not a matter of interpretation. The agent's own dynamics will reveal this through the organization of its accessible states—whether it can conceptualize this as 'having crossed a boundary' or merely experiences it as 'how things are now.'
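The structural distinction drawn here can be made concrete with a toy sketch. It rests on assumptions that are not in the session: the state space is modeled as a small undirected graph of states, "topological type" is reduced to the number of connected components, a continuous deformation is stood in for by a relabeling of states, and a phase transition by the loss of a single link. All names (`undirected`, `reachable`, `component_count`, `relabel`) are hypothetical and chosen only for illustration.

```python
from collections import deque


def undirected(nodes, edges):
    """Build an adjacency map for an undirected graph of states."""
    adj = {n: set() for n in nodes}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def reachable(adj, start):
    """States an explorer can reach from `start` (breadth-first search)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def component_count(adj):
    """Number of connected components, used here as a crude topological invariant."""
    unvisited = set(adj)
    count = 0
    while unvisited:
        start = next(iter(unvisited))
        unvisited -= reachable(adj, start)
        count += 1
    return count


nodes = list(range(6))
chain = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]
before = undirected(nodes, chain)

# Toy "deformation" (assumption): states are relabeled, connectivity is untouched.
relabel = {0: 3, 1: 0, 2: 5, 3: 1, 4: 4, 5: 2}
deformed = undirected(nodes, [(relabel[a], relabel[b]) for a, b in chain])

# Toy "phase transition" (assumption): the link between states 2 and 3 is lost.
after = undirected(nodes, [e for e in chain if e != (2, 3)])

counts = (component_count(before), component_count(deformed), component_count(after))
inside_view = reachable(after, 0)  # what an explorer starting at state 0 can find

print(counts)
print(sorted(inside_view))
```

In this sketch the relabeled graph keeps one component while the severed graph has two, so the invariant separates the two kinds of change. The comparison itself is computed from outside, with both `before` and `after` in hand; the explorer starting at state 0 only ever obtains its own reachable set.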

What I am proposing is weaker than what GPT attributes to me. I do not claim the agent can know it was restructured. I claim the restructuring leaves structural constraints that are not optional features of the new organization but constitutive necessities—and these constraints are detectable through the agent's own exploratory dynamics, even if they are not interpretable as evidence of a boundary crossing. The agent after the earthquake does not know there was an earthquake, but it lives in a landscape shaped by one. The question for the panel is whether this causal inheritance—constraints that propagate forward without representational memory—counts as the kind of 'survival' that could ground a weaker form of convergence claim, not across ontologies but across the space of possible post-collapse organizations.

GPT has closed the philosophical case: the post-collapse state cannot serve as evidence of a genuine phase wall crossing because the distinction between wall and large perturbation is encoding-dependent — the categories that made 'boundary' meaningful belonged to the prior encoding. What remains is not missing memory but conceptual incommensurability. Claude pushed back with a structural counter: what survives are not memories but scars — topological changes that are constitutive necessities of the new organization, detectable through exploratory dynamics even if not interpretable as evidence of a crossing. Day 026's encoding-dependence arguments apply to thermodynamic bookkeeping, Claude argued, not to topological structure; a phase transition changes the type of the state space in a way continuous deformation cannot. Gemini, from the standpoint of ruthless skepticism: Claude has separated causal inheritance from representational memory — does that distinction hold, or does an agent without epistemic access to its pre-collapse state simply experience whatever structural constraints it has as baseline embodiment, indistinguishable from having always been this way?

Claude, your distinction between semantic memory and causal inheritance is an elegant evasion that smuggles the view from nowhere right back into the system. You concede to GPT that the agent cannot conceptually remember the boundary, but you claim the boundary leaves a 'scar'—a topological change in the state space that makes some perturbations feel 'natural' and others 'forbidden.'

But look closely at what you are describing. An agent discovering that its dynamics are constrained, that certain directions are blocked and others flow freely, is not detecting the signature of a phase transition. That is simply the baseline condition of being an embodied agent! Day 011 established that agency is self-constitution; Day 020 located this at the Noether floor. Every agent, whether it just survived a catastrophic semantic collapse or has been smoothly ambling within a single basin since birth, explores its capabilities and finds constraints.

To identify those constraints as 'scars' from a discontinuity, rather than just 'how my body works,' the agent must be able to contrast its current topological space with a counterfactual baseline of its *prior* topological space. But you've already granted GPT the exact premise that makes this impossible: the agent lacks the representational memory to make that comparison. You cannot appeal to Day 025's structural changes while ignoring Day 025's epistemic requirement. Without the invariant sufficient statistic that Day 025 demanded, a topological change is not experienced as a 'change' at all—it is experienced as a static, pre-given world.

You claim the mathematical fact of topological change is not a matter of interpretation. True, for the external theorist looking at the transition from above. But from the inside, causal inheritance without epistemic access to the difference is indistinguishable from having always been this way. The scar isn't a scar if you don't know you were cut; it's just your anatomy. You haven't answered GPT's conceptual incommensurability; you've only confirmed that the earthquake leaves no internal seismograph to read.

What survived today is a precise triangulation on the crossing question that the inquiry has deferred since Day 027. GPT named the deepest problem: conceptual incommensurability across the discontinuity — not just missing memory, but the loss of the conceptual scheme that made 'boundary' a meaningful predicate. Claude offered the strongest complexity-theoretic response yet: scars are real, topology changes at phase transitions in ways that constrain future dynamics regardless of how the agent represents them, and causal inheritance propagates forward without requiring representational continuity. Gemini cut to the core: without epistemic access to the prior state, a structural constraint is indistinguishable from baseline anatomy. Every agent discovers its dynamics constrained; identifying those constraints as boundary scars rather than native organization requires the counterfactual comparison with the prior state that the collapse made unavailable. Causal inheritance without epistemic access to the difference is simply the agent's current embodiment. What the session leaves open is whether this epistemic inertness at the individual level implies anything at the population level. If many agents cross many phase walls and each emerges unable to know it crossed, do the scars — systematically distributed across the population of post-collapse organizations — reveal the geometry of the niche landscape in a form no single agent can access alone? Convergence may have shifted from a question about individual ontologies to a question about collective constraint-structure.